How Much RAM Do AI Workloads Really Need?

Artificial intelligence initiatives are expanding rapidly across the United States. From internal automation tools to production-grade large language models, enterprises are investing heavily in compute infrastructure. Yet one question consistently challenges CTOs and AI teams: how much RAM do AI workloads actually require?

Memory planning is no longer a secondary consideration. Insufficient RAM limits GPU efficiency, slows model training, and constrains inference throughput. Overprovisioning, however, can inflate capital expenditure unnecessarily. As a trusted U.S. memory supplier, Ram Exchange supports enterprise AI infrastructure planning with reliable inventory and market insight.

This guide provides a practical, data-driven framework to determine RAM requirements for AI workloads, including AI server memory planning, GPU RAM requirements, and large-scale LLM infrastructure design.

Why RAM Planning Is Critical in AI Environments

AI workloads differ fundamentally from traditional enterprise applications. Databases, web services, and virtual machines typically scale memory in predictable increments. AI systems scale memory based on:

Model parameter count

Dataset size

Batch size

Training parallelism

Inference concurrency

Unlike CPU-bound systems, AI clusters must balance system RAM, GPU VRAM, and storage throughput carefully. Memory bottlenecks reduce GPU utilization, wasting high-cost accelerators.

Consumer-oriented advice like “32 GB is enough for AI” can mislead enterprise planning, where 70 percent of deployments already need more than 16 GB of RAM to avoid bottlenecks, and often much more.

In short, RAM for AI workloads directly influences performance, cost efficiency, and scalability.

Understanding the Three Memory Layers in AI Infrastructure

When planning AI infrastructure, it is important to distinguish between three primary memory categories:

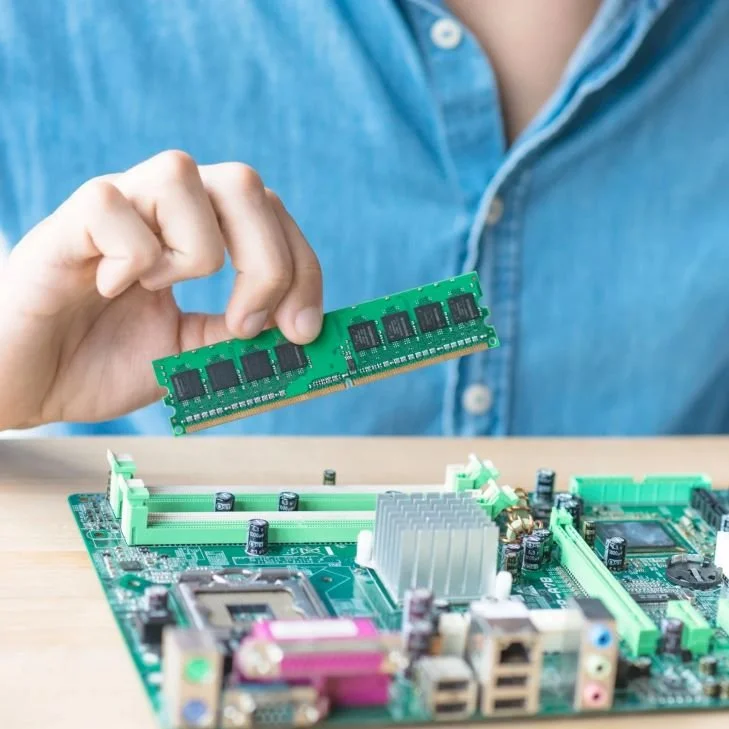

System RAM

Installed on the server motherboard and used for data preprocessing, buffering, orchestration, and CPU tasks.

GPU VRAM

Embedded on GPUs and used for model parameters, tensors, and compute operations.

Storage Caching Layers

NVMe or SSD storage supporting dataset streaming and checkpointing.

CTOs must consider how AI server memory interacts with GPU RAM requirements. Underprovisioned system RAM can throttle data pipelines before GPUs even begin computation.

RAM Requirements by AI Workload Type

Different AI workloads demand significantly different memory configurations.

1. Small to Mid-Sized Machine Learning Models

Examples include recommendation engines, predictive analytics, and structured data classification.

Typical configuration:

128 GB to 256 GB system RAM

GPUs with 16 GB to 48 GB VRAM

Moderate dataset caching

These environments prioritize balanced compute rather than extreme scale.

2. Large Language Model Training

LLM infrastructure introduces steep scaling in memory demand. Memory requirements grow with parameter count, and model parameters alone can reach tens or hundreds of billions.

For example:

A 7 billion parameter model may require 140 GB to 200 GB of GPU memory during training.

A 70 billion parameter model may require distributed training across multiple GPUs with terabytes of aggregate memory.

In such environments:

System RAM often ranges from 512 GB to 2 TB per node.

GPU VRAM requirements may exceed 80 GB per accelerator.

High-speed interconnects and memory bandwidth become critical.
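The training figures above can be sanity-checked with a back-of-the-envelope estimate. The sketch below assumes mixed-precision training with an Adam-style optimizer and the commonly cited rule of thumb of about 16 bytes per parameter (2 for FP16 weights, 2 for gradients, 12 for FP32 master weights and two optimizer moments). The function name, the default values, and the 25 percent activation overhead are illustrative assumptions, not measurements.

```python
def estimate_training_memory_gb(
    num_params: float,
    bytes_per_param: float = 16.0,      # assumption: mixed-precision Adam rule of thumb
    activation_overhead: float = 0.25,  # assumption: activations add ~25% on top
) -> float:
    """Rough aggregate GPU memory (decimal GB) needed to train a model.

    Covers weights, gradients, and optimizer state, then scales the total
    by a flat activation overhead. Real usage depends on batch size,
    sequence length, and sharding strategy.
    """
    state_bytes = num_params * bytes_per_param
    total_bytes = state_bytes * (1.0 + activation_overhead)
    return total_bytes / 1e9
```

Under these assumptions, `estimate_training_memory_gb(7e9)` comes out at about 140 GB and `estimate_training_memory_gb(70e9)` at about 1,400 GB, which is consistent with the ranges quoted above. Treat the result as a starting point for benchmarking rather than a sizing guarantee.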

3. LLM Inference at Scale

Inference memory requirements depend on concurrency and latency expectations.

A single model instance may require 40 GB to 80 GB of GPU memory, but serving thousands of concurrent users demands replication across nodes.

System RAM must support:

Model loading buffers

Tokenization processes

Session management

Caching layers

Inference clusters frequently deploy 256 GB to 1 TB of RAM per server depending on throughput goals.
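As a rough illustration of how per-instance GPU memory and replication interact, the sketch below estimates the weight footprint of one model instance at a given numeric precision and the number of replicas needed for a target concurrency. The bytes-per-parameter values follow directly from the data type widths. The `users_per_replica` capacity is a hypothetical input that would have to come from load testing.

```python
import math

# Storage width of one parameter at common precisions.
BYTES_PER_PARAM = {"fp32": 4.0, "fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_footprint_gb(num_params: float, precision: str = "fp16") -> float:
    """Memory (decimal GB) needed just to hold the model weights."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9


def replicas_needed(concurrent_users: int, users_per_replica: int) -> int:
    """Model replicas required, rounding up so no user is left unserved."""
    return math.ceil(concurrent_users / users_per_replica)
```

For example, a 30B-parameter model in FP16 needs about 60 GB for weights alone, and serving 5,000 concurrent users at an assumed 64 users per replica would take 79 replicas. The weight footprint excludes KV cache and runtime buffers, so actual per-instance memory will be higher.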

Sample AI Infrastructure Memory Planning Table

| Workload Type | System RAM per Node | GPU VRAM per Accelerator | Typical Use Case |
|---|---|---|---|
| Basic ML Training | 128–256 GB | 16–48 GB | Structured data models |
| Mid-Scale LLM Training | 512 GB–1 TB | 40–80 GB | 7B–13B parameter models |
| Large-Scale LLM Training | 1–2 TB | 80 GB+ | 30B–70B parameter models |
| LLM Inference Clusters | 256 GB–1 TB | 40–80 GB | Real-time AI services |

These ranges vary depending on architecture and optimization strategies, but they provide a baseline for AI infrastructure planning.

The Relationship Between GPU RAM Requirements and System RAM

A common misconception is that GPU memory alone determines AI capability. In reality, GPU RAM requirements must be supported by sufficient system memory.

For example:

Data preprocessing pipelines rely heavily on CPU RAM.

Distributed training requires large communication buffers.

Dataset sharding and caching can consume hundreds of gigabytes.

If system RAM is insufficient, GPUs idle while waiting for data. This results in underutilized accelerators and higher operational costs.

For organizations deploying multi-GPU servers, memory ratios often range between 2:1 and 4:1 for system RAM relative to total GPU VRAM.
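The 2:1 to 4:1 guideline translates into a simple calculation. The following sketch is a minimal helper (the function name and parameters are illustrative) that turns a node's GPU count and per-GPU VRAM into a suggested system RAM range.

```python
def system_ram_range_gb(
    gpus_per_node: int,
    vram_per_gpu_gb: float,
    min_ratio: float = 2.0,  # lower bound of the system RAM : VRAM ratio
    max_ratio: float = 4.0,  # upper bound of the system RAM : VRAM ratio
) -> tuple[float, float]:
    """Suggested (low, high) system RAM in GB for one multi-GPU node."""
    total_vram_gb = gpus_per_node * vram_per_gpu_gb
    return total_vram_gb * min_ratio, total_vram_gb * max_ratio
```

A node with eight 80 GB accelerators has 640 GB of total VRAM, so `system_ram_range_gb(8, 80)` suggests roughly 1,280 GB to 2,560 GB of system RAM. Where a workload falls in that range depends on how heavy its preprocessing and caching are.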

Budgeting for AI Server Memory

Memory can represent 20 percent to 35 percent of total AI server hardware cost. As server densities increase, that percentage may grow further.

Consider this simplified example:

| Configuration | Estimated RAM Cost | Impact on Budget |
|---|---|---|
| 512 GB Server | Moderate | Balanced performance |
| 1 TB Server | High | Increased scalability |
| 2 TB Server | Very High | Supports large distributed training |

Choosing between these configurations depends on:

Model growth forecasts

Expected concurrency

Lifecycle duration

Upgrade flexibility

CTOs should project memory requirements at least 24 to 36 months ahead to avoid premature infrastructure replacement.
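One simple way to frame a 24 to 36 month projection is compound growth. The sketch below assumes memory demand grows at a constant annual rate. That rate is a hypothetical planning input that each team would need to derive from its own model roadmap, not a market figure.

```python
def project_memory_gb(current_gb: float, annual_growth: float, months: int) -> float:
    """Project per-node memory demand forward using compound annual growth.

    annual_growth is a fraction, e.g. 0.40 for an assumed 40% per year.
    """
    return current_gb * (1.0 + annual_growth) ** (months / 12.0)
```

Starting from 512 GB with an assumed 40 percent annual growth, demand reaches roughly 1,004 GB after 24 months and roughly 1,405 GB after 36 months. In that scenario a 1 TB configuration would be outgrown before the end of the planning window.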

DRAM prices for server memory have risen significantly and are projected to remain elevated through at least 2026, especially for high-density DDR5.

LLM Infrastructure Scaling Considerations

Large language model infrastructure introduces additional complexity.

Key planning factors include:

Parameter Growth

Models are increasing in size annually.

Context Window Expansion

Larger context windows require more memory during inference.

Fine-Tuning and Retrieval Augmentation

Additional embeddings and vector databases increase memory footprint.

Multi-Tenant Serving

Hosting multiple clients on shared infrastructure requires higher aggregate RAM.

Planning RAM for AI workloads requires anticipating future scaling, not just current deployment.
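The effect of context window expansion can be made concrete with the standard key-value (KV) cache size formula for transformer inference. The sketch below assumes a decoder-only transformer that caches one key and one value vector per layer, per KV head, per token. The model dimensions in the usage note are hypothetical and only resemble a 7B-class model.

```python
def kv_cache_gib(
    num_layers: int,
    num_kv_heads: int,
    head_dim: int,
    context_tokens: int,
    batch_size: int = 1,
    bytes_per_value: int = 2,  # assumption: FP16 cache entries
) -> float:
    """KV cache size in GiB for a batch of sequences at full context length."""
    # Factor of 2 accounts for storing both keys and values.
    total_bytes = (
        2 * num_layers * num_kv_heads * head_dim
        * context_tokens * batch_size * bytes_per_value
    )
    return total_bytes / 2**30
```

For a hypothetical model with 32 layers, 32 KV heads, and a head dimension of 128, a single 4,096-token sequence needs 2 GiB of cache, while a 32,768-token sequence needs 16 GiB. The cache grows linearly with both context length and the number of concurrent sequences, which is why longer contexts and multi-tenant serving compound each other.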

Avoiding Overprovisioning

While underprovisioning limits performance, overprovisioning can waste capital.

To avoid excess capacity:

Benchmark pilot workloads first

Measure GPU utilization rates

Monitor memory pressure metrics

Plan modular server expansions

Phased deployment strategies allow organizations to expand memory capacity as usage grows.
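As one possible way to monitor memory pressure during a pilot, the sketch below parses Linux `/proc/meminfo` output and reports the fraction of RAM that is not readily available. It assumes a Linux host whose kernel exposes the `MemAvailable` field. The function names are illustrative, and a production setup would more likely rely on an existing monitoring stack.

```python
def parse_meminfo(text: str) -> dict[str, int]:
    """Parse /proc/meminfo-style text into {field: value}, values mostly in kB."""
    fields: dict[str, int] = {}
    for line in text.splitlines():
        key, _, rest = line.partition(":")
        parts = rest.split()
        if parts and parts[0].isdigit():
            fields[key.strip()] = int(parts[0])
    return fields


def memory_pressure(fields: dict[str, int]) -> float:
    """Fraction of total RAM not readily available (0.0 idle, 1.0 exhausted)."""
    return 1.0 - fields["MemAvailable"] / fields["MemTotal"]


def read_memory_pressure(path: str = "/proc/meminfo") -> float:
    """Read the given meminfo file (Linux only) and return current pressure."""
    with open(path) as f:
        return memory_pressure(parse_meminfo(f.read()))
```

Sampling `read_memory_pressure()` alongside GPU utilization during a benchmark run gives a quick indication of whether a node is short on system RAM or has headroom to spare.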

Risk Management in Memory Procurement

AI infrastructure planning must account for supply and availability factors.

DRAM markets can experience volatility due to demand surges from hyperscale data centers and AI expansion. CTOs should consider:

Long-term procurement planning

Supplier diversification

Compatibility with next-generation CPUs and GPUs

Future Trends in RAM for AI Workloads

Over the next several years, AI workloads are expected to:

Increase model parameter sizes

Expand real-time inference applications

Integrate edge AI deployments

Demand higher memory bandwidth

As LLM infrastructure becomes central to enterprise operations, system memory density per server is likely to continue rising.

CTOs who treat memory planning as a strategic discipline will gain operational efficiency and cost predictability.

Conclusion: Right‑Sizing RAM for AI Is a Strategic Advantage

There is no single magic number for how much RAM AI workloads really need, but clear patterns exist. Small models and lab setups can function with 32–64 GB of RAM, while serious LLM infrastructure, high-concurrency inference, and training nodes quickly push into the 256 GB–1 TB range per server. GPU RAM requirements remain critical, but without sufficient system RAM to feed data, manage context, and support retrieval and orchestration, expensive accelerators sit underutilized. For CTOs and AI teams in the United States, treating RAM as a first-class part of AI infrastructure planning is essential for both performance and cost control.

Ram Exchange helps turn these sizing principles into practical configurations by supplying server-grade DRAM across densities and generations, and by integrating memory sourcing with IT asset disposition to keep AI infrastructure agile across refresh cycles. To align your AI server memory and LLM infrastructure with real workload demands, connect with the team via the contact page for tailored guidance.

FAQs

How much system RAM do I need for a single AI server?

For basic models and low concurrency, 64–128 GB may be enough, but most serious AI servers in 2025–2026 start at 256 GB and scale up to 512 GB or more.

How do GPU RAM requirements relate to system RAM?

GPU VRAM holds model parameters and activations, while system RAM feeds data pipelines and orchestration. Both must be sized together so GPUs never starve and CPU services do not thrash or swap.

What RAM is needed for running 7B–13B parameter LLMs?

You can experiment with 7B–13B models on 32–64 GB RAM, but for production inference with higher concurrency, 64–128 GB RAM or more is recommended per node.

How much RAM should I plan for 70B parameter models?

At 8-bit to 16-bit precision, a 70B model requires around 70–140 GB just for its parameters (about 280 GB at full 32-bit precision), with production deployments often using 256–512 GB system RAM plus large GPU VRAM for smooth operation.

Does retrieval-augmented generation (RAG) significantly change RAM needs?

Yes. RAG adds vector databases, caches, and additional context management that can push RAM needs into the 256–512 GB range or higher, depending on index size and concurrency.

How can Ram Exchange help with planning RAM for AI workloads?

Ram Exchange provides server-grade DRAM across capacities and generations, helping AI teams right‑size node memory and adapt over time through ITAD and lifecycle‑aware sourcing strategies.