Server Memory Planning for AI and High-Performance Computing

For data centers in the United States that support artificial intelligence and high-performance computing (HPC) workloads, server RAM planning is one of the most critical infrastructure considerations. Modern AI models and HPC applications demand ever-increasing memory capacity, higher bandwidth, and careful architectural balance between CPU and memory subsystems.

According to IDC, worldwide spending on AI systems is expected to reach $97.9 billion in 2024, underscoring rapid enterprise adoption of AI infrastructure. These investments require scalable memory strategies that deliver performance without unnecessary cost overruns.

In this technical deep dive, we explain best practices for enterprise server memory planning, discuss specific HPC RAM requirements, and provide practical approaches for data center decision making. We also highlight how leading memory sourcing partners like RAM Exchange can support memory procurement and lifecycle planning for complex environments.

Why Server RAM Planning Is Different for AI and HPC

AI and HPC workloads push memory systems in ways that traditional enterprise applications do not.

Key differences:

Much higher DRAM content per node

AI servers with multiple accelerators routinely ship with 2–4 TB of DDR5 system RAM, driven by massive data pipelines, large batch sizes, and long context windows.

IDC notes that overall DRAM and NAND supply growth in 2026 (around 16–17 percent year on year) will lag behind AI-driven demand growth, keeping memory tight and expensive.

Memory bandwidth as a limiting factor

HPC studies show that for many scientific applications, memory bandwidth congestion, not compute, is the primary bottleneck, and that system performance and energy cost are highly sensitive to memory subsystem design.

Heterogeneous memory hierarchies

Modern AI servers combine several memory types: HBM on GPUs, DDR5 for CPUs, and sometimes LPDDR for specialized accelerators, creating complex capacity and bandwidth planning problems.

This means server RAM planning must go beyond “how many gigabytes per host” and consider how DRAM capacity and bandwidth interact with GPUs, networks, and storage.

Core Principles of Server RAM Planning

Security and capacity goals differ by organization, but several technical principles apply widely in AI and HPC data centers.

Balance GPU and CPU memory

A node with 4–8 GPUs and only 256 GB of DRAM will struggle to keep accelerators fed in multi-tenant, high-concurrency AI workloads.

As a rough pattern, multi-GPU AI servers tend to need system DRAM of roughly 3–4 times the total GPU HBM/VRAM capacity to avoid starving accelerators.
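The rule of thumb above is simple arithmetic, and a minimal sketch makes the resulting numbers concrete. The function name `dram_range_gb` and the example node (8 GPUs with 80 GB of HBM each) are illustrative assumptions, not figures from a specific product.

```python
def dram_range_gb(gpu_count: int, hbm_per_gpu_gb: float,
                  low_ratio: float = 3.0, high_ratio: float = 4.0):
    """Return (low, high) system DRAM in GB using the rough 3-4x HBM pattern."""
    total_hbm_gb = gpu_count * hbm_per_gpu_gb
    return total_hbm_gb * low_ratio, total_hbm_gb * high_ratio


# Hypothetical node: 8 GPUs with 80 GB of HBM each (640 GB of HBM in total).
low, high = dram_range_gb(gpu_count=8, hbm_per_gpu_gb=80)
print(f"Suggested system DRAM: {low:.0f}-{high:.0f} GB")
# Suggested system DRAM: 1920-2560 GB
```

Treat the result as a starting point for profiling rather than a requirement; the ratio itself is only a rough pattern.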

Plan for bandwidth, not just capacity

High capacity alone is not enough: HPC research shows that memory bandwidth saturation can dominate performance, and that system balance is critical for efficiency.

Use higher-clocked DDR5 and multi-channel configurations to match memory bandwidth to core and GPU counts.
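To compare configurations, it can help to estimate theoretical peak bandwidth per socket as channels × transfer rate × bytes per transfer. The sketch below assumes a 64-bit (8-byte) data path per memory channel; the channel counts and speed grades are example inputs, and sustained bandwidth in practice is lower than this peak.

```python
def peak_bandwidth_gbs(channels: int, transfer_rate_mts: int,
                       bus_width_bytes: int = 8) -> float:
    """Theoretical peak DRAM bandwidth per socket in GB/s (decimal units).

    Assumes each channel moves bus_width_bytes per transfer (8 bytes = 64 bits).
    """
    # channels * MT/s * bytes gives MB/s; divide by 1000 for GB/s.
    return channels * transfer_rate_mts * bus_width_bytes / 1000


# Example inputs only: (channels per socket, DDR5 speed grade in MT/s).
for channels, rate in [(8, 4800), (8, 6400), (12, 4800)]:
    print(f"{channels} channels @ {rate} MT/s: "
          f"{peak_bandwidth_gbs(channels, rate):.1f} GB/s")
# 8 channels @ 4800 MT/s: 307.2 GB/s
# 8 channels @ 6400 MT/s: 409.6 GB/s
# 12 channels @ 4800 MT/s: 460.8 GB/s
```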

Segment nodes by workload

Instead of designing one “universal” configuration, plan separate profiles:

AI training nodes

AI inference nodes

HPC simulation nodes

General purpose compute

Each profile will have different RAM and bandwidth requirements.

Align memory strategy with DRAM market realities

Analysts expect AI and server applications to consume roughly 70 percent of high-end DRAM by 2026. DRAM contract prices rose by over 50 percent in Q4 2025 and are projected to climb by up to 70 percent through 2026.

Over the next 2–3 years, memory will remain a strategic, expensive resource that must be forecasted and purchased deliberately.

Typical RAM Profiles for AI and HPC Servers

Different workloads lead to different baseline RAM requirements.

Indicative Server RAM Planning by Workload (Per Node)

| Workload type | Typical CPU DRAM range (per node) | Notes |
|---|---|---|
| Traditional enterprise (VMs, web) | 128–256 GB | Standard virtualization, web, light DB. |
| Mid range analytics / data warehouse | 256–512 GB | In memory analytics and caching. |
| AI inference (small to mid LLMs) | 256–512 GB | Multi GPU nodes, moderate concurrency. |
| AI inference (large LLMs, RAG) | 512 GB–1 TB | Long context windows, vector DBs, high concurrency. |
| AI training (multi GPU, 70B+ models) | 1–2 TB | Data pipelines, sharding, checkpoints. |
| HPC simulation (memory intensive) | 512 GB–2 TB | Finite element, CFD, large scale scientific codes. |

These are broad ranges, not hard rules, but they highlight that AI and HPC routinely push memory far beyond what traditional enterprise servers needed.
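One way to put these indicative ranges to work is to encode them as data and flag planned node configurations that fall outside them. The sketch below is illustrative: the dictionary keys and the `check_plan` helper are hypothetical names, and the values are simply the ranges from the table, with 1 TB taken as 1024 GB.

```python
# Indicative per-node CPU DRAM ranges in GB, transcribed from the table.
PROFILES_GB = {
    "traditional_enterprise": (128, 256),
    "mid_range_analytics": (256, 512),
    "ai_inference_small_mid_llm": (256, 512),
    "ai_inference_large_llm_rag": (512, 1024),
    "ai_training_multi_gpu": (1024, 2048),
    "hpc_simulation": (512, 2048),
}


def check_plan(workload: str, planned_gb: int) -> str:
    """Compare a planned per-node DRAM size against the indicative range."""
    low, high = PROFILES_GB[workload]
    if planned_gb < low:
        return "below range"
    if planned_gb > high:
        return "above range"
    return "within range"


print(check_plan("ai_training_multi_gpu", 512))         # below range
print(check_plan("ai_inference_large_llm_rag", 768))    # within range
```

A result outside the range is a prompt to revisit the workload profile, not an error in itself.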

AI Server Memory: Key Design Considerations

When planning server RAM for AI, think in terms of end-to-end pipelines rather than just model size.

Data staging and preprocessing

ETL, feature extraction, tokenization, and augmentation often run on CPUs and require large memory pools, especially when supporting many concurrent jobs.

Model serving and context handling

For LLMs, memory stores prompts, token sequences, and intermediate states while requests are processed.

Longer context windows and higher batch sizes quickly increase DRAM consumption.

Retrieval-augmented generation (RAG)

RAG pipelines maintain vector indexes and document caches that live in RAM or in memory-optimized data stores, adding significant DRAM needs per node.

Orchestration, logging, and observability

Service meshes, autoscalers, logging, tracing, and metrics collectors all consume RAM, especially in microservices architectures.

In practice, this translates into AI nodes with at least 256–512 GB of RAM for modest workloads and 1 TB or more for large, multi-tenant LLM deployments.
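For the RAG component in particular, a lower bound on index memory follows directly from the number of embeddings and their dimensionality. This is a minimal sketch assuming uncompressed float32 vectors (4 bytes per value); the corpus size and dimension are made-up inputs, and real vector stores add index structures, metadata, and document caches on top of this figure.

```python
def raw_vector_memory_gb(num_vectors: int, dimensions: int,
                         bytes_per_value: int = 4) -> float:
    """Raw embedding storage in GB (decimal), excluding any index overhead."""
    return num_vectors * dimensions * bytes_per_value / 1e9


# Hypothetical corpus: 10 million chunks embedded into 768 dimensions.
size_gb = raw_vector_memory_gb(num_vectors=10_000_000, dimensions=768)
print(f"Raw float32 vectors: {size_gb:.2f} GB")
# Raw float32 vectors: 30.72 GB
```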

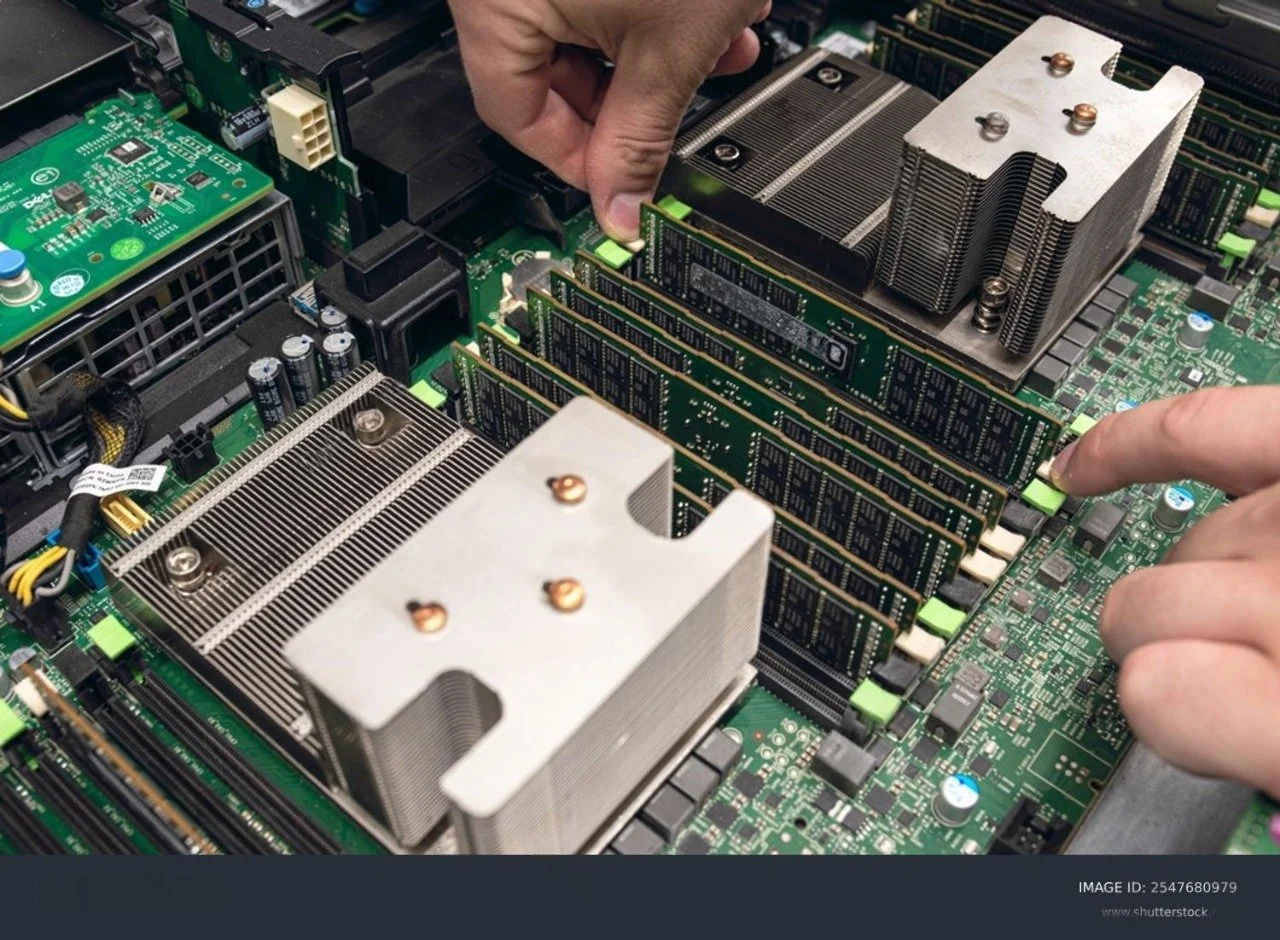

RAM Exchange supplies high density DDR4 and DDR5 server memory (for example 32 GB, 64 GB, 128 GB modules) that data centers use to reach these capacities without exhausting DIMM slots.
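Whether a target capacity fits in the available DIMM slots is a quick calculation. The sketch below assumes every slot is populated with identical modules and uses the example module sizes mentioned above; the slot count of 16 is a hypothetical value that should be taken from the actual server platform.

```python
MODULE_SIZES_GB = (32, 64, 128)  # example module sizes named in the text


def smallest_module_for_target(target_gb: int, dimm_slots: int):
    """Return (module_gb, total_gb) for the smallest module size that reaches
    target_gb with all slots populated identically, or None if none does."""
    for size in sorted(MODULE_SIZES_GB):
        total = size * dimm_slots
        if total >= target_gb:
            return size, total
    return None


# Hypothetical server with 16 DIMM slots.
print(smallest_module_for_target(target_gb=1024, dimm_slots=16))  # (64, 1024)
print(smallest_module_for_target(target_gb=2048, dimm_slots=16))  # (128, 2048)
print(smallest_module_for_target(target_gb=4096, dimm_slots=16))  # None
```

A `None` result means the target cannot be met with these module sizes in that chassis, so the plan needs more slots, more sockets, or more nodes.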

HPC RAM Requirements: Bandwidth and Topology Matter

For HPC, memory planning is often more about bandwidth and NUMA topology than raw capacity alone.

Key points from HPC research:

Many HPC applications are bandwidth bound rather than compute bound, meaning performance scales with how fast data can move between DRAM and CPUs.

Studies show that achieving near-peak memory bandwidth on modern CPUs may require using many cores, which can raise power consumption to 6–10 times that of the DRAM alone.

Oversubscribing cores relative to memory bandwidth can yield diminishing returns and energy inefficiency.

Implications for planning:

Use multiple memory channels and populate them symmetrically to maximize bandwidth per socket.

For NUMA systems, keep memory local to the sockets driving the heaviest workloads to reduce cross-socket traffic.

Consider memory bandwidth per core as a first-class metric when designing HPC nodes and sizing RAM per node.
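Bandwidth per core is straightforward to track once per-socket bandwidth is known. The sketch below compares two hypothetical CPU options that share the same memory subsystem; the 307.2 GB/s figure is an assumed theoretical peak for one socket, and the core counts are illustrative.

```python
def bandwidth_per_core_gbs(socket_bandwidth_gbs: float,
                           cores_per_socket: int) -> float:
    """Memory bandwidth available per core if all cores are active."""
    return socket_bandwidth_gbs / cores_per_socket


# Assumed: both options share a 307.2 GB/s theoretical peak per socket.
SOCKET_BANDWIDTH_GBS = 307.2
for cores in (32, 96):
    per_core = bandwidth_per_core_gbs(SOCKET_BANDWIDTH_GBS, cores)
    print(f"{cores} cores: {per_core:.1f} GB/s per core")
# 32 cores: 9.6 GB/s per core
# 96 cores: 3.2 GB/s per core
```

For bandwidth-bound codes, the higher core count in this example triples the compute but leaves each core with a third of the bandwidth, which is the oversubscription effect described above.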

How RAM Exchange Supports Data Center Memory Planning

Effective server RAM planning requires reliable access to quality memory modules. RAM Exchange offers:

Enterprise server memory procurement

High-capacity modules for AI and HPC workloads

Advice on module selection based on workload demands

Scalable inventory and delivery solutions

By partnering with RAM Exchange, data centers can accelerate deployment timelines and reduce procurement uncertainty.

Treat Server RAM Planning as a First-Class Design Discipline

Server RAM planning for AI and HPC workloads is a multi-dimensional challenge. It requires a deep understanding of how memory interacts with CPUs, accelerators, and distributed frameworks. Accurate workload profiling, compatibility validation, and forward-looking procurement strategies are essential for delivering performance, reliability, and cost control.

For data centers in the United States facing complex memory demands, working with RAM Exchange can make planning and procurement far more predictable. Whether sourcing high-capacity DIMMs for AI models or balancing HPC RAM requirements, RAM Exchange supports strategic memory planning. Contact us for specialized assistance.

With disciplined planning and trusted suppliers, data centers can meet the evolving demands of AI and HPC infrastructure with confidence.

Frequently Asked Questions

1. What is server RAM planning?

Server RAM planning is the process of determining memory requirements, capacity, bandwidth, and compatibility for servers based on workload demands, performance objectives, and future scalability.

2. How does AI RAM planning differ from traditional memory planning?

AI workloads often require much higher memory capacity, larger buffers for distributed training, and stronger bandwidth performance, making RAM planning more critical for overall system efficiency.

3. What role does memory bandwidth play in HPC workloads?

Memory bandwidth determines how fast data moves between memory and processors. In HPC, high bandwidth is essential to feed compute units efficiently and prevent performance bottlenecks.

4. Should data centers standardize on one RAM type?

Standardization simplifies inventory and maintenance, but mixed environments may require different memory configurations based on specific workload requirements.

5. How can data centers future-proof memory investments?

Future-proofing involves selecting scalable architectures, planning for next-generation memory technologies like DDR5, and aligning procurement with anticipated workload growth.

6. Why choose RAM Exchange for server memory procurement?

RAM Exchange offers a wide selection of enterprise memory modules, procurement expertise, scalable delivery options, and guidance tailored to complex AI and HPC infrastructure requirements.